Orchestrating Inference: How Kubernetes, Ray, and vLLM Coordinate Under the Hood

A deep dive into how Kubernetes, Ray, and vLLM coordinate to transform independent GPUs into a synchronized inference machine.

A deep dive into how Kubernetes, Ray, and vLLM coordinate to transform independent GPUs into a synchronized inference machine.

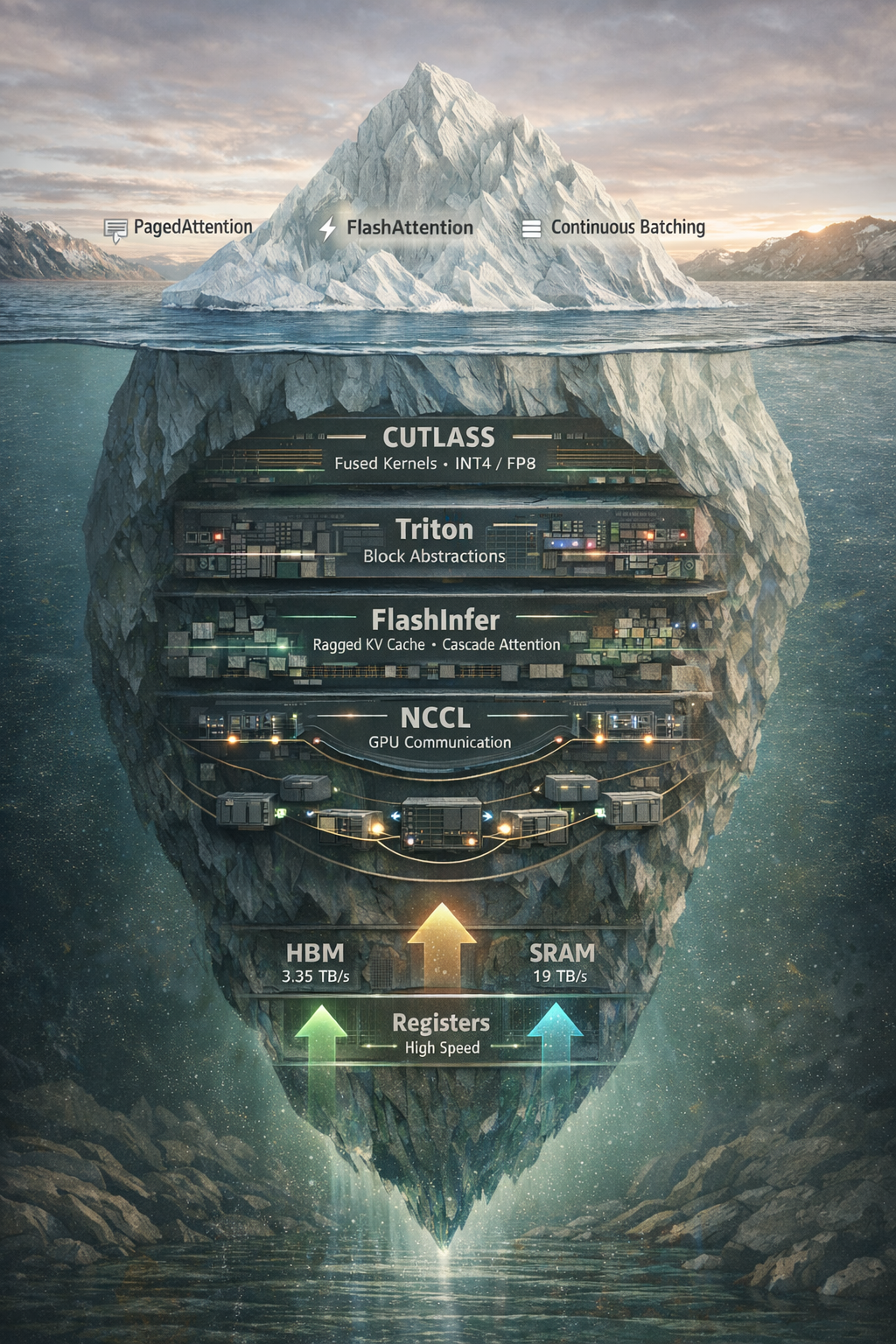

Beyond vLLM and PagedAttention: exploring NCCL, CUTLASS, Triton, and FlashInfer, the libraries that actually make LLM inference fast.